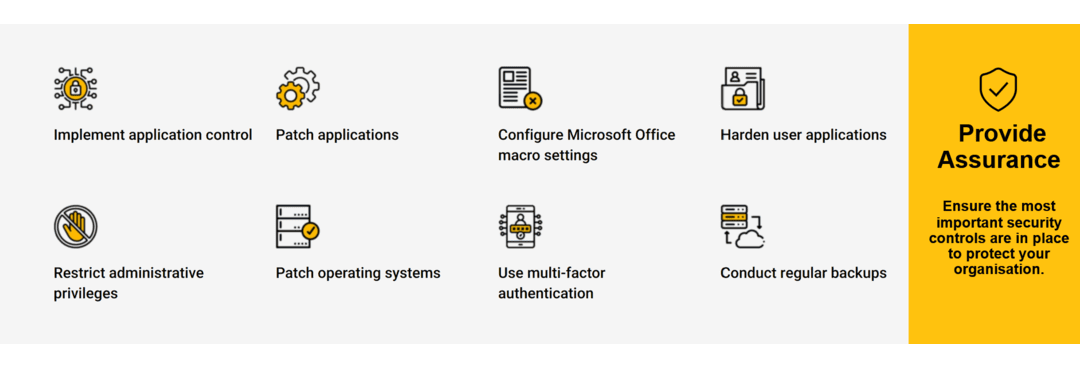

Australia’s defence industry is undergoing a security transformation. The Defence Industry Security Program (DISP) saw pivotal cyber compliance reforms between September 2024 and July 2025, designed to confront escalating threats and align the supply chain with government best practices. Whether you’re a long-time DISP member or a new applicant, understanding what has changed, and why it matters, is crucial to protecting your eligibility for tenders, securing sensitive information, and building business resilience.

What Changed in the DISP in 2025?

The latest DISP reforms have reshaped the baseline for cyber security in the defence sector:

1. Expansion to Full Essential Eight:

Until October 2024, DISP required only the “Top 4” Essential Eight strategies. After the 2024 update, all DISP applicants and renewals must implement and maintain the full Essential Eight mitigation strategies at Maturity Level 2. This level is designed to counter both opportunistic attackers and adversaries willing to invest more time and effort in targeting an organisation.

2. Stronger Requirements for Compliance:

The reforms require regular, detailed cyber security reporting, including a robust questionnaire at application and renewal. Members must actively manage their controls, not just implement them once, and keep improving maturity over time.

3. Alignment with National Priorities:

DISP now aligns directly with the recommendations of the Australian Signals Directorate (ASD), the Protective Security Policy Framework (PSPF), and upcoming legislative and grant program priorities focused on the sovereign defence industrial base.

Why Do These DISP Changes Matter?

1. Compliance Is Business-Critical:

Meeting DISP’s new cyber and assurance standards is now mandatory for all serious defence suppliers. Non-compliance can mean losing access to Defence contracts, heightened audit risk, and reputational damage.

2. Threats Are Evolving:

Advanced persistent threats, ransomware, and supply chain compromise are now mainstream risks for all defence contractors. By uplifting their cyber security maturity, companies protect not only their own data but also Australia’s national interests.

3. Ongoing Uplift Is Essential:

It’s no longer acceptable to assess once and ‘set and forget’. The expectation is continual improvement and regular re-assessment aligned to evolving threats and requirements.

What Is the Essential 8, and Why Does It Matter Now?

The Essential 8 consists of eight strategies developed by the ASD to help organisations defend against, respond to, and recover from cyber threats:

- Application control

- Patch applications

- Configure Microsoft Office macro settings

- User application hardening

- Restrict administrative privileges

- Patch operating systems

- Multi-factor authentication

- Regular backups

Industry Best Practices

DISP now mandates all eight strategies at Maturity Level 2, not just the “Top 4”. This means more than technical implementation. It involves proactive management, routine testing, and regular validation of protective measures.

How Virtuelle Group Can Help: Your DISP Compliance Partner

At Virtuelle Group, we support organisations through the entire DISP and Essential 8 journey. Contact us today to schedule a personalised DISP and Essential 8 compliance review. Let’s turn regulatory obligation into your strategic advantage.

Ready to Turn Compliance Burden Into Business Advantage?

Don’t leave DISP compliance (or your organisation’s resilience) to chance. The new expectations demand leadership, not just checklists.


Accelerating ACSC Essential 8 Compliance: Fast-Track Your Cybersecurity Resilience

Is your organisation ready to tackle the next major cyber threat—or are unseen gaps putting your business, customers, and reputation at risk?

The Australian Cyber Security Centre’s (ACSC) Essential Eight is no longer just a standard—it’s the defence line separating businesses that thrive from those that fall to cyber incidents. In a fast-evolving threat landscape, delayed compliance can mean lost contracts, damaged trust, and higher insurance costs. Yet, many Australian organisations struggle with slow, piecemeal implementations or overwhelmed IT teams. That’s where Virtuelle makes the difference.

Why Accelerate Your Essential 8 Compliance?

Understanding why speed matters will empower your organisation far beyond just “ticking the boxes.” Here’s what you need to know.

1. The Threat Landscape Moves Faster Than Regulation

- Cybercriminals continuously develop new exploits. A lag between awareness of threats and implementation of controls means attackers often outpace slow compliance efforts.

- Recent attacks in Australia and globally demonstrate that even basic gaps—like unpatched applications or weak passwords—can lead to millions in damages within hours, not weeks.

2. Compliance is No Longer Optional—It’s a Commercial Reality

- Insurance and Contracts: Many insurers are now asking for proof of Essential 8 maturity before granting coverage. Similarly, government and enterprise clients may require compliance before contracts can be signed or renewed.

- Regulatory Pressure: The Australian government has signalled an intent to make aspects of the ACSC framework mandatory or part of critical infrastructure protection. Early compliance gives your business flexibility and time to adapt.

3. The Cost of Delay Is Measured in Dollars and Trust

- Business Disruption: A targeted ransomware attack, data breach, or system outage doesn’t just bring technical headaches—it halts your operations and erodes customer confidence.

- Financial Impact: IBM’s 2024 Cost of a Data Breach Report found that Australian breaches cost an average of about AUD 4.3 million. Proactive mitigation, such as the Essential 8 controls, can significantly reduce both the likelihood and the cost of a breach.

- Reputation Loss: With growing media attention on cyber incidents, customers now check for evidence of robust cybersecurity before sharing sensitive data or making high-value purchases.

4. Compliance Is a Process, Not a Project

- The Essential Eight Maturity Model defines four Maturity Levels, from 0 (limited) to 3 (strong). Many organisations only reach Level 1, which can give a false sense of security.

- Accelerated compliance doesn’t mean skipping steps—it means building a solid foundation quickly, then iterating with ongoing improvement.
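The maturity levels above can be made concrete with a small sketch. ASD assesses each of the eight strategies separately. The Python below assumes one simple convention for summarising them: take the lowest level achieved across all eight, so that a single weak strategy caps the overall result. The `Maturity` enum, the `overall_maturity` function, and the rule that an unassessed strategy counts as Level 0 are illustrative assumptions, not ASD's assessment method.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """Essential Eight maturity levels, from 0 (limited) to 3 (strong)."""
    ZERO = 0
    ONE = 1
    TWO = 2
    THREE = 3


# The eight mitigation strategies in the ASD Essential Eight.
STRATEGIES = (
    "application control",
    "patch applications",
    "configure Microsoft Office macro settings",
    "user application hardening",
    "restrict administrative privileges",
    "patch operating systems",
    "multi-factor authentication",
    "regular backups",
)


def overall_maturity(assessed: dict[str, Maturity]) -> Maturity:
    """Summarise per-strategy levels as the lowest level achieved.

    Assumption: any strategy missing from `assessed` counts as Level 0.
    """
    return min(assessed.get(strategy, Maturity.ZERO) for strategy in STRATEGIES)


levels = {strategy: Maturity.TWO for strategy in STRATEGIES}
levels["regular backups"] = Maturity.ONE
print(overall_maturity(levels).name)  # ONE
```

Under this convention, seven strategies at Level 2 with backups at Level 1 still summarise to Level 1. Reporting the weakest link this way avoids overstating the organisation's posture.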

The Virtuelle Approach: Fast, Expert, Tailored

At Virtuelle, we’ve engineered a streamlined, battle-tested pathway to Essential 8 compliance that doesn’t compromise on depth, quality, or your unique business context.

1. Comprehensive Gap Assessment & Strategic Planning

- Rapidly assess your current state against the ACSC Essential Eight Maturity Model—zeroing in on your weak points and quick wins.

- Benchmark your maturity, map risks, and set realistic but ambitious targets—aligning compliance to both your business objectives and specific industry regulations.
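At its core, a gap assessment of this kind compares each strategy's current level with a target. The sketch below is a hypothetical helper, not part of any ASD tooling. It takes self-assessed levels (integers 0 to 3, with missing strategies assumed to be 0) and returns the strategies below target, largest gap first, as a starting point for prioritising work.

```python
# Hypothetical helper: levels are integers 0-3 for each Essential Eight strategy.
STRATEGIES = (
    "application control",
    "patch applications",
    "configure Microsoft Office macro settings",
    "user application hardening",
    "restrict administrative privileges",
    "patch operating systems",
    "multi-factor authentication",
    "regular backups",
)


def maturity_gaps(current: dict[str, int], target: int) -> list[tuple[str, int]]:
    """Return (strategy, levels_short) pairs below `target`, largest gap first.

    Strategies missing from `current` are assumed to be at level 0.
    """
    gaps = [
        (strategy, target - current.get(strategy, 0))
        for strategy in STRATEGIES
        if current.get(strategy, 0) < target
    ]
    # Sort by gap size (descending), then by name so the output is deterministic.
    return sorted(gaps, key=lambda item: (-item[1], item[0]))


current = {strategy: 2 for strategy in STRATEGIES}
current["application control"] = 0
current["regular backups"] = 1
print(maturity_gaps(current, target=2))
# [('application control', 2), ('regular backups', 1)]
```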

2. Accelerated, Phased Implementation

- Deploy controls in high-impact phases: application control (sometimes called application whitelisting), patch management, multi-factor authentication (MFA), restriction of administrative privileges, regular backups, and more.

- Each step is guided by certified, battle-hardened consultants—not generic playbooks—ensuring best practice is tailored to your real world.

3. Ongoing Management & Reporting

- Continuous 24/7 monitoring, incident response, and regular benchmarking—so your compliance and security aren’t one-off achievements, but enduring strengths.

- Clear, actionable reporting and communication that help you secure executive buy-in, support insurance applications, and confidently answer customer audits.

4. Business Value Beyond Compliance

- Reassure your partners and customers with clear, demonstrable security maturity.

- Lower your cyber insurance premiums and meet regulatory obligations with confidence.

- Maximise ROI on security investments and ensure your IT team stays focused on what matters most for your mission.

How Can Virtuelle Group Help?

Virtuelle Group: Your Partner for Fast, Sustainable Essential 8 Compliance

- Proven Essential 8 Experience: Advising public, private, and critical infrastructure clients

- Custom Strategic Roadmaps: Actionable guidance focused on your business needs and regulatory landscape

- Automation-First Approach: Deploying best-in-class solutions for lasting compliance

- Continuous Improvement: Regulatory monitoring, incident simulation, and regular cyber health checks

- Maximise Business Value: Build trust, win more contracts, and meet insurance conditions with real cyber maturity

Ready to Fast-Track Your Essential 8 Compliance?

Don’t let uncertainty or complexity stall your cybersecurity program. Book a complimentary Essential 8 cyber health check with Virtuelle Group to discover practical steps your organisation can take. Get in touch today.

Contact us today to learn how Virtuelle Group can deliver the guidance, technical expertise, and ongoing support you need so you can focus on running your business with confidence.

Top Cybersecurity Predictions for 2025: What Every Business Needs to Know

Consider this scenario: a single, unnoticed vulnerability has the power to cripple an entire organisation overnight. As we move deeper into 2025, this isn’t just a hypothetical situation; it’s a very real threat. Cybercriminals are more sophisticated than ever, and rapid technological innovation is opening new doors for exploitation at an unprecedented pace.

Today, cybersecurity goes far beyond traditional defence strategies. It has become a matter of organisational survival in a digital landscape that’s constantly evolving. The risks are higher, the attacks more complex, and the consequences more severe.

So, what are the most pressing cybersecurity challenges organisations face in 2025? Let’s explore the critical issues that are reshaping the security landscape this year.

1. The Growing Attack Surface

The adoption of digital tools, hybrid work environments, and third-party software solutions is dramatically increasing the number of potential entry points for cybercriminals. With organisations relying on a mix of cloud platforms, on-premises servers, and Internet of Things (IoT) devices, maintaining comprehensive oversight has become a significant challenge.

Alarmingly, research from Gartner reveals that only 17% of organisations are able to accurately identify and inventory at least 95% of their assets. This lack of visibility leaves critical gaps in security, providing opportunities for attackers to infiltrate networks and move laterally without detection. As the attack surface continues to grow, organisations must prioritise asset management and visibility to strengthen their overall security posture.
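One way to put a number on this visibility gap is to compare the assets actually observed on the network (for example, from scans or DHCP logs) against the asset inventory. The sketch below assumes both are available as sets of hypothetical asset identifiers. The 95% figure is borrowed from the Gartner statistic above as an example threshold.

```python
def inventory_coverage(inventoried: set[str], discovered: set[str]) -> float:
    """Fraction of discovered assets that are recorded in the inventory (0.0 to 1.0)."""
    if not discovered:
        return 1.0  # nothing observed, so nothing is missing
    return len(inventoried & discovered) / len(discovered)


def unmanaged_assets(inventoried: set[str], discovered: set[str]) -> list[str]:
    """Assets seen on the network but absent from the inventory, sorted by name."""
    return sorted(discovered - inventoried)


def meets_visibility_target(inventoried: set[str], discovered: set[str], target: float = 0.95) -> bool:
    """True if inventory coverage reaches the example 95% target."""
    return inventory_coverage(inventoried, discovered) >= target


# Hypothetical asset identifiers.
inventory = {"srv-01", "srv-02", "laptop-17"}
seen_on_network = {"srv-01", "srv-02", "laptop-17", "cam-lobby"}
print(f"{inventory_coverage(inventory, seen_on_network):.0%}")  # 75%
print(unmanaged_assets(inventory, seen_on_network))             # ['cam-lobby']
print(meets_visibility_target(inventory, seen_on_network))      # False
```

The unmanaged list is often the more useful output. An unknown device such as a lobby camera is exactly the kind of entry point attackers look for.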

2. The Ever-Evolving Ransomware Landscape

Ransomware attacks are getting smarter. Cybercriminals now use tools like artificial intelligence (AI) and exploit previously unknown security gaps (called zero-day vulnerabilities). While large companies often make headlines, smaller businesses are also being targeted due to weaker security systems.

Ransomware has evolved to become highly organised, with attackers using advanced techniques to bypass traditional defences, causing significant financial and reputational damage.

- Attackers increasingly use AI to automate and scale ransomware attacks.

- Zero-day vulnerabilities are exploited to gain undetected access to systems.

- Smaller businesses, often with weaker security, are becoming prime targets.

“Cybercriminals will increasingly exploit zero-day vulnerabilities, expanding potential entry points and bypassing traditional security measures to deliver more ransomware attacks.”

The rise of ransomware-as-a-service (RaaS) has also lowered the barrier for entry into cybercrime. RaaS platforms allow inexperienced actors to execute sophisticated attacks, further expanding the scope and frequency of ransomware campaigns.

3. Shifting Regulatory Landscapes & Compliance Pressures

New laws and standards are pushing organisations to improve their cybersecurity practices. Legislation such as the Australian Privacy Act, guidance from the US Cybersecurity and Infrastructure Security Agency (CISA), and international standards such as ISO 27001 all push businesses to keep better track of their data and systems. Non-compliance is no longer just a regulatory risk; it can erode customer trust and damage brand reputation.

These regulations increasingly demand organisations prove proactive security measures, such as regular risk assessments, incident response plans, and transparent reporting protocols. The move towards harmonised global frameworks is also notable, as businesses working across borders must navigate overlapping requirements. This trend underscores the growing importance of embedding compliance into broader cybersecurity strategies.

4. AI: A Tool and a Threat

Artificial intelligence (AI) is reshaping cybersecurity in ways both beneficial and dangerous. On one hand, AI-powered systems are enabling faster threat detection, automated responses, and predictive analytics that help pre-empt attacks.

On the other, attackers are using the same technology to make cyberattacks more effective. AI is being used to craft phishing emails that are more believable, create deepfakes that impersonate executives, and find vulnerabilities in systems with unprecedented speed.

Key areas where AI poses challenges include:

- Phishing and Social Engineering: AI-generated phishing campaigns are harder to detect, often mimicking legitimate communications flawlessly.

- Deepfakes: Sophisticated fake videos or voice impersonations can be used to manipulate or deceive.

- Autonomous Attacks: AI-driven attacks may run without human intervention, making them faster and more unpredictable.

AI also raises questions about governance. As organisations increasingly deploy AI tools for defence, ensuring these systems are secure, ethical, and free from bias will be critical. Misconfigured or poorly managed AI systems could unintentionally expose sensitive information or introduce new vulnerabilities.

5. Quantum Computing Risks

Quantum computing is poised to revolutionise many fields, but it also presents a major challenge to current cybersecurity frameworks. Widely used public-key encryption methods, such as RSA and elliptic-curve cryptography, rely on the computational difficulty of mathematical problems like factoring large numbers. A sufficiently powerful quantum computer could solve these problems efficiently. This means that sensitive data protected today might be vulnerable to decryption in the future.

Even though quantum computing is still in its early stages, attackers are already preparing for its arrival. By harvesting encrypted data now, they hope to decrypt it when quantum capabilities become mainstream.

This threat is particularly concerning for organisations dealing with long-term sensitive information, such as government agencies and healthcare providers. The timeline for quantum threats may still be uncertain, but the need for quantum-resistant encryption standards is becoming increasingly urgent.
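A commonly cited rule of thumb for this "harvest now, decrypt later" risk is Mosca's inequality. Let x be the number of years data must stay confidential, y the years needed to migrate to quantum-resistant cryptography, and z the years until a cryptographically relevant quantum computer exists. If x + y exceeds z, the data is already exposed. The sketch below encodes that check. All inputs are estimates the organisation must supply, and the example figures are hypothetical.

```python
def quantum_exposed(shelf_life_years: float,
                    migration_years: float,
                    years_to_quantum: float) -> bool:
    """Mosca's inequality: data is at risk if x + y > z.

    x: years the data must remain confidential
    y: years needed to migrate to quantum-resistant cryptography
    z: estimated years until a cryptographically relevant quantum computer
    """
    return shelf_life_years + migration_years > years_to_quantum


# Hypothetical figures: patient records kept confidential for 25 years,
# a 5-year migration, and a 15-year estimate for quantum capability.
print(quantum_exposed(25, 5, 15))  # True
```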

6. Supply Chain Vulnerabilities

Supply chains are often complex, involving multiple vendors and third-party services. These interconnected systems create opportunities for attackers to exploit weak links.

A breach in one supplier’s systems can quickly ripple through the network, compromising multiple organisations. Recent years have seen a rise in supply chain attacks targeting software vendors, hardware providers, and even service contractors.

- Common Targets: Software updates, open-source components, and third-party integrations are frequent points of exploitation.

- Impact: Attacks often spread widely before detection, disrupting entire industries or critical infrastructure.

- Growth of Dependency Risks: As organisations increasingly rely on cloud-based solutions, the interconnected nature of supply chains amplifies vulnerabilities.

This growing dependence on third-party software and services demands greater scrutiny of vendor practices and more rigorous security protocols throughout the supply chain.
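Software updates are one of the common targets listed above. A basic safeguard is to check a downloaded update against the SHA-256 digest the vendor publishes before installing it. The Python sketch below uses only the standard library's `hashlib`. It assumes the published digest was obtained over a trusted channel. It is also weaker than verifying a vendor's digital signature, which additionally proves who produced the file.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large installers need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_update(path: str, published_sha256: str) -> bool:
    """True only if the file's digest matches the vendor-published value."""
    # hexdigest() is lowercase; normalise the published value before comparing.
    return sha256_of_file(path) == published_sha256.strip().lower()
```

In use, a deployment script would call `verify_update` with the downloaded file and the vendor's published digest, and refuse to install when it returns `False`.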

7. IoT and Edge Devices: A Rising Security Priority

The rapid growth of the Internet of Things (IoT) and edge computing has transformed industries, from healthcare to manufacturing. However, these technologies also introduce vulnerabilities. IoT devices, such as sensors, cameras, and smart appliances, often lack security features. Attackers can exploit these weaknesses to gain entry to larger networks or disrupt critical operations.

Edge computing, which processes data closer to where it is generated, also presents unique challenges. While it improves efficiency, decentralising data processing increases the number of potential attack points.

In industries like healthcare, where IoT and edge devices manage sensitive patient data, a breach could have catastrophic consequences. The growing attack surface created by these technologies underscores the need for better security practices and standards.

Summary

As we look ahead to the cybersecurity landscape of 2025, it’s clear that organisations must remain proactive and adaptable. The risks range from increasingly sophisticated ransomware attacks and tightening regulatory requirements to the disruptive potential of AI and quantum computing. Understanding these emerging threats is the first step toward building effective defences.

Success in this environment requires more than just awareness; it demands a strategic, well-structured approach to IT and security management. By investing in expert guidance and leveraging advanced, customised tools, businesses can strengthen their defences and maintain operational stability even as the threat landscape grows more complex.

Ultimately, preparation is key. Organisations that prioritise resilience and continuous improvement will not only be able to withstand cyber threats, but also position themselves to thrive in the digital future.

How Can Virtuelle Group Help?

Navigating the rapidly evolving cybersecurity landscape of 2025 requires more than just reactive measures; it demands a strategic, proactive approach. Virtuelle is uniquely positioned to help your organisation address today’s most pressing security challenges with expertise, advanced technology, and a partnership mindset.

1. Implementing a Strategic Cyber Maturity Plan

Virtuelle partners with your organisation to develop and execute a tailored cyber maturity roadmap.

2. Proactive Threat Detection & Response

Leverage advanced AI-driven monitoring and expert support for early detection and rapid response to cyber threats.

3. Regulatory Compliance Support

Navigate complex regulations with expert guidance on risk assessments, policy development, and audit readiness.

4. Supply Chain Security

Assess and mitigate third-party risks to protect your organisation from supply chain vulnerabilities.

5. Future-Ready Cybersecurity

Prepare for emerging technologies and threats with strategic advice on quantum-safe encryption and AI security.

Are you confident your cybersecurity strategy is prepared for the evolving threats of 2025? Let Virtuelle help you build a stronger defence. Get in touch today.

Contact us today to learn how Virtuelle Group can deliver the guidance, technical expertise, and ongoing support you need so you can focus on running your business with confidence.

Why All Mid-Sized Businesses Should Consider ISO27001 Compliance

Today, ISO27001 has become a crucial requirement for mid-sized businesses across different industries, especially those handling sensitive data. Organisations involved in supply chains, government contracts, financial services, and healthcare now recognise its value in safeguarding critical information and maintaining trust.

As cyber threats evolve and regulations become stricter, businesses without effective information security measures face financial loss, reputational harm, and lost opportunities.

What is ISO27001 and why is it Important?

ISO27001 is an internationally recognised framework designed to help businesses protect sensitive information through a structured risk management process. It ensures companies implement security measures to reduce cyber threats, prevent data breaches, and follow legal requirements. For mid-sized businesses, it enhances resilience, builds trust with customers, and creates new business opportunities.

The Benefits of ISO27001 for Mid-Sized Businesses

1. Managing Cyber Risks and Strengthening Business Resilience

Cyber threats continue to grow, and mid-sized businesses often lack the security infrastructure to keep up. ISO27001 provides a structured approach by requiring organisations to find vulnerabilities, assess risks, and establish appropriate security controls (Clause 6.1.2-6.1.3). This ensures threats are addressed before they become critical issues.
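ISO27001 requires a defined, repeatable risk assessment process but does not prescribe a particular scoring method. A common choice is to score each risk as likelihood multiplied by impact and to treat anything at or above an acceptance threshold. The sketch below assumes 1 to 5 scales and a threshold of 12. The `Risk` class, the threshold, and the example risks are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minimal) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def risks_to_treat(risks: list[Risk], threshold: int = 12) -> list[Risk]:
    """Risks at or above the acceptance threshold, highest score first."""
    above = [risk for risk in risks if risk.score >= threshold]
    return sorted(above, key=lambda risk: risk.score, reverse=True)


register = [
    Risk("Unpatched VPN appliance", likelihood=4, impact=5),
    Risk("Lost unencrypted laptop", likelihood=3, impact=4),
    Risk("Office printer outage", likelihood=2, impact=1),
]
print([risk.name for risk in risks_to_treat(register)])
# ['Unpatched VPN appliance', 'Lost unencrypted laptop']
```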

Some of the key measures businesses should implement include:

- Access restrictions that limit unauthorised access to sensitive data and systems (Annex A.9).

- Encryption that protects data confidentiality, so that even if systems are breached, information remains unreadable to attackers (Annex A.10).

- Incident response planning that ensures organisations can detect, manage, and recover from cyber incidents effectively (Annex A.16).

- Business continuity strategies that help businesses maintain essential operations during cyberattacks or system failures (Annex A.17).

2. Meeting Supply Chain and Government Security Expectations

Businesses that supply goods or services to government entities or large enterprises are expected to meet strict security requirements. Failure to comply can result in lost contracts and missed opportunities. ISO27001 helps organisations align with these security expectations by mandating strong supplier risk management practices and regulatory compliance.

Companies looking to work with regulated industries should focus on:

- Supplier security evaluations to ensure third-party vendors do not introduce vulnerabilities into the supply chain (Annex A.15).

- Regulatory compliance frameworks that align business operations with industry and legal requirements (Annex A.18).

- ISO27001 certification, which is increasingly a prerequisite for securing government contracts and enterprise partnerships.

3. Strengthening Customer Trust and Reputation

Consumer confidence is built on the assurance that businesses handle sensitive information securely. A single data breach can damage a company’s reputation, leading to lost customers and diminished credibility. ISO27001 establishes clear policies for protecting customer data, reducing the risk of breaches.

Businesses can maintain trust and credibility by:

- Implementing security policies that define how customer data is handled and protected (Annex A.5).

- Providing cybersecurity awareness training for employees to minimise human error and improve security culture (Annex A.7.2.2).

- Applying data classification controls to restrict access to sensitive information, ensuring only authorised personnel can handle confidential data (Annex A.8.2.1).
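Classification-based access control, as described in the last point, can be sketched as two checks. First, the user's clearance must be at least the data's label. Second, the user must meet a need-to-know requirement. ISO27001 does not mandate particular labels, so the four levels and the group names below are assumptions for illustration.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical labels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


def can_access(clearance: Classification,
               label: Classification,
               user_groups: frozenset[str] = frozenset(),
               required_groups: frozenset[str] = frozenset()) -> bool:
    """Grant access only if clearance covers the label AND the user
    belongs to every group the data is restricted to (need-to-know)."""
    return clearance >= label and required_groups <= user_groups


print(can_access(Classification.CONFIDENTIAL, Classification.INTERNAL))  # True
print(can_access(Classification.RESTRICTED, Classification.CONFIDENTIAL,
                 frozenset({"finance"}), frozenset({"hr"})))              # False
```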

4. Simplifying Compliance with Legal and Regulatory Requirements

Compliance with industry regulations can be complex and time-consuming. ISO27001 simplifies this by aligning security policies with global legal frameworks, helping businesses avoid regulatory fines and legal disputes.

To maintain compliance and avoid penalties, organisations should focus on:

- Identifying and meeting applicable legal and regulatory requirements, such as GDPR, HIPAA, and Australia’s Privacy Act (Annex A.18.1.1).

- Establishing data retention policies that define how long sensitive information is stored and when it must be securely deleted (Annex A.8.3).

- Implementing privacy controls that prevent unauthorised access and misuse of personal data (Annex A.8.2.3).
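A retention policy becomes enforceable once each record type has a defined retention period. The sketch below flags records whose retention period has expired. The record types and periods in `RETENTION_DAYS` are hypothetical placeholders, since real periods depend on the laws and contracts that apply to the business.

```python
from datetime import date, timedelta

# Illustrative retention periods only; real periods depend on applicable law and contracts.
RETENTION_DAYS = {
    "cctv_footage": 30,
    "job_application": 365,
    "invoice": 7 * 365,
}


def due_for_deletion(records: list[tuple[str, str, date]], today: date) -> list[str]:
    """Return IDs of records whose retention period has expired.

    Each record is (record_id, record_type, created_on). Records of an
    unknown type are skipped here so that they can be reviewed manually.
    """
    due = []
    for record_id, record_type, created_on in records:
        period = RETENTION_DAYS.get(record_type)
        if period is None:
            continue
        if created_on + timedelta(days=period) < today:
            due.append(record_id)
    return due


records = [
    ("r1", "cctv_footage", date(2025, 1, 1)),
    ("r2", "invoice", date(2024, 1, 1)),
]
print(due_for_deletion(records, today=date(2025, 3, 1)))  # ['r1']
```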

5. Improving Security Processes and Managing Costs

Beyond security, ISO27001 helps businesses improve efficiency and reduce costs. Without clear security policies, organisations may overspend on solutions that do not address their actual risks. The standard provides a framework for prioritising security investments based on risk assessments.

To optimise security spending and minimise costs, businesses should:

- Conduct regular security audits to identify vulnerabilities and improve defences (Clause 9.2).

- Implement real-time monitoring to detect and mitigate threats before they escalate (Annex A.12.4).

- Allocate resources strategically to focus investments on the most critical security risks (Clause 7.1).
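The real-time monitoring point can be illustrated with a simple rule: alert when one account accumulates several failed logins within a short window. The Python below is a minimal sliding-window sketch. It assumes authentication events arrive as time-ordered `(timestamp, username, succeeded)` tuples. The threshold of five failures in ten minutes is an example value, and production monitoring would normally run in a SIEM or log platform rather than a standalone script.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def detect_bruteforce(events, threshold=5, window=timedelta(minutes=10)):
    """Return usernames with `threshold` or more failed logins inside `window`.

    `events` is an iterable of (timestamp, username, succeeded) tuples,
    assumed to be sorted by timestamp.
    """
    recent_failures = defaultdict(deque)
    alerted = []
    for timestamp, username, succeeded in events:
        if succeeded:
            continue
        failures = recent_failures[username]
        failures.append(timestamp)
        # Drop failures that have slid out of the time window.
        while timestamp - failures[0] > window:
            failures.popleft()
        if len(failures) >= threshold and username not in alerted:
            alerted.append(username)
    return alerted


start = datetime(2025, 1, 1, 9, 0)
events = [(start + timedelta(minutes=m), "alice", False) for m in range(5)]
events.append((start + timedelta(minutes=6), "bob", False))
print(detect_bruteforce(sorted(events)))  # ['alice']
```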

Summary

ISO27001 is no longer just for enterprise firms. As businesses expand, work with regulated industries, and handle increasing amounts of sensitive data, adopting a structured security framework is essential. Companies that invest in ISO27001 compliance not only strengthen their security but also gain a competitive advantage.

For mid-sized businesses, ISO27001 is both a shield against cyber threats and a strategic enabler for growth, efficiency, and trust. The most successful implementations are driven by leadership, tailored to business needs, and focused on continuous improvement.

Mid-sized businesses that act now will be better positioned to meet future security demands while maintaining operational stability and customer trust.

How Can Virtuelle Group Help?

Virtuelle Group offers a comprehensive suite of services to guide your business through every stage of ISO27001 compliance, ensuring your information security management system (ISMS) meets the highest standards and delivers lasting value.

1. Gap Analysis & Roadmap Development

Virtuelle Group collaborates with your stakeholders to understand your current environment and business objectives.

2. Remediation & Implementation

Our team provides hands-on support to address vulnerabilities and close compliance gaps.

3. Internal Audits & Certification Preparation

Virtuelle Group conducts internal audits to verify compliance and readiness for external certification.

4. Privacy & Regulatory Guidance

Virtuelle Group helps you understand and meet your obligations, minimising legal and reputational risk.

5. Ongoing Compliance & Security Management

Security is not a one-off project. We offer ongoing vulnerability remediation, regular reviews, and continuous improvement services.

Ready to achieve ISO27001 compliance and unlock new opportunities?

Contact us today to learn how Virtuelle Group can deliver the guidance, technical expertise, and ongoing support you need so you can focus on running your business with confidence.

7 Ways AI is Transforming Decision-Making in Modern Businesses

Businesses today face growing complexity in decision-making as they navigate evolving market demands and technological advancements. Artificial Intelligence (AI) has become a critical tool for enhancing business performance through smarter, data-driven decisions.

With capabilities such as advanced data analysis, predictive insights, and process automation, AI can help organisations remain competitive and adaptive. This article explores seven impactful ways AI can reshape business decision-making.

1. Enhancing Data-Driven Decisions

Data-driven decision-making has become essential in today’s competitive landscape. Extracting meaningful insights from extensive data sources can be challenging without advanced technology. AI-powered systems can analyse large datasets, identify patterns, and generate actionable insights that improve business strategies.

For example, retailers can use AI to predict product demand by considering multiple data points such as historical sales, store visit trends, planned store openings, and cyclical market factors. This approach helps optimise inventory management by ensuring high-demand products are in stock while minimising surplus inventory, reducing costs and improving profitability.

2. Strengthening Risk Management

Effective risk management depends on predicting and mitigating potential threats. AI-powered monitoring systems can continuously evaluate operational data, flagging suspicious patterns that might signal emerging risks. This proactive approach enables businesses to respond before issues escalate.

For instance, financial institutions can deploy AI to detect fraudulent transactions by analysing real-time account activity. An AI system could recognise unusual withdrawal patterns in customer accounts and automatically trigger security alerts, enabling quicker fraud prevention.

3. Boosting Operational Efficiency

Operational efficiency depends on streamlined workflows and minimal manual effort. AI can automate repetitive tasks, reduce processing times, and minimise human error, allowing businesses to reallocate resources to higher-value projects.

Consider a logistics company using AI to optimise delivery routes. An AI-driven system could analyse live traffic data, weather forecasts, and driver availability to recommend the fastest and most cost-effective delivery schedules.

4. Personalising Customer Engagement

Meeting customer expectations requires personalised interactions. AI-driven tools can help analyse customer behaviour to deliver tailored marketing campaigns, product recommendations, and support services.

An e-commerce platform, for example, could use AI to suggest products based on browsing habits, recent purchases, and product reviews. This tailored shopping experience could increase conversion rates while enhancing customer loyalty.

5. Supporting Strategic Planning

Long-term success depends on strategic foresight. AI-powered systems can simulate various business scenarios, helping leaders evaluate outcomes and choose strategies based on predictive insights.

Energy companies can use AI-driven predictive models to anticipate electricity demand based on weather patterns and historical usage data. This insight can inform production schedules, ensuring energy is supplied efficiently while reducing waste.

6. Enhancing Employee Productivity

Repetitive tasks can drain employee productivity. AI-powered tools take over these tasks, enabling employees to focus on creative problem-solving, innovation, and strategic initiatives.

For example, marketing teams can use AI-driven content generators such as Microsoft Copilot to draft initial campaign content based on audience data. Meanwhile, AI-powered project management platforms can schedule tasks, set deadlines, and track progress, helping teams stay on track.

7. Enabling Real-Time Decision-Making

Fast-changing business environments require immediate responses. AI-powered systems can process live data, enabling companies to make real-time adjustments and remain competitive.

For example, a hospitality company might use an AI-driven booking management system to adjust room rates or offer special rates based on real-time booking trends. If the system detects a spike in demand for accommodation during a local event, it could automatically increase room rates or promote last-minute packages to maximise occupancy and revenue.

Maximising Business Potential with AI

Successfully adopting AI requires businesses to find the right tools and strategies. Microsoft Foundry and Microsoft Fabric are two platforms designed to simplify AI adoption through model management, data integration, and operational scalability.

Foundry focuses on enabling developers and data scientists to build, deploy, and manage AI models at scale. Fabric brings data integration, engineering, and analytics together in a single platform, so that data is clean, validated, and ready for actionable insights.

Businesses can unlock AI’s full potential by using tools that help drive smarter decisions and enhance competitiveness. Investing in AI-driven solutions empowers organisations to operate more efficiently, respond to market changes faster, and maintain long-term growth in a technology-driven world.

How Can Virtuelle Group Help?

Virtuelle can empower your business to leverage AI for smarter decision-making by providing tailored solutions that address your unique challenges. With Virtuelle’s expertise, your organisation can:

- Automate data analysis to uncover actionable insights quickly and accurately

- Streamline operations by integrating intelligent automation into everyday workflows

- Improve customer experiences through personalised recommendations and faster response times

- Strengthen risk management by identifying potential issues before they escalate

- Drive innovation by identifying new opportunities and optimising processes using AI

Contact us today to learn how Virtuelle Group can help your business stay ahead of the curve, making confident, data-driven decisions in a rapidly evolving landscape.

Employee Mistakes: 5 Critical DLP Gaps Putting Your Organisation at Risk

Discover how poor Data Loss Prevention leaves organisations vulnerable to employee errors, insider threats, and security breaches. Learn how the right DLP strategies can protect sensitive data before it’s too late.

The Role of Employee Errors in Data Loss

Data Loss Prevention (DLP) strategies are designed to safeguard sensitive data. When poorly implemented, however, they fail to account for employee errors, which are one of the leading causes of data loss. Here’s how these errors, coupled with insider threats, can compromise organisational security:

1. Accidental Exposure of Sensitive Data

Employees often unintentionally mishandle sensitive data, such as sending confidential files to the wrong recipient or uploading documents to unsecured platforms. These errors can lead to data breaches, reputational harm, and regulatory penalties.

To prevent this, implement automated DLP policies that flag or block data transfers containing sensitive information. For example, a DLP system can automatically detect when an employee attempts to send an email containing unencrypted financial data outside the organisation, and prevent the action.
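The rule described above can be sketched as follows. This is a minimal illustration that assumes payment card numbers stand in for "financial data" and that the organisation uses a single internal email domain. `INTERNAL_DOMAIN`, `should_block_email` and the detection logic are all hypothetical and are not any DLP product's API. Commercial DLP engines use much richer classifiers.

```python
import re

# Hypothetical internal domain; replace with your organisation's domain(s).
INTERNAL_DOMAIN = "example.com.au"

# Candidate payment card numbers: 13-19 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum used by payment cards."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def contains_card_number(text: str) -> bool:
    """Flag text containing at least one Luhn-valid, card-like number."""
    for match in CARD_PATTERN.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            return True
    return False


def should_block_email(recipients: list[str], body: str, encrypted: bool) -> bool:
    """Block unencrypted messages that carry card data to any external recipient."""
    external = [r for r in recipients if not r.lower().endswith("@" + INTERNAL_DOMAIN)]
    return bool(external) and not encrypted and contains_card_number(body)
```

The Luhn check is included because it reduces false positives. Long reference numbers that merely look like card numbers are not blocked.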

2. Overly Broad Data Access

When employees have access to more data than their roles require, the risk of accidental leaks or intentional misuse increases significantly. For instance, an employee from the marketing team accessing sensitive financial records could lead to unintentional exposure.

A solution here is enforcing a “least privilege” access model. Role-based access controls (RBAC) ensure employees can only access the data they need. Pair this with regular audits to ensure access permissions are current and appropriate.
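A least-privilege check and a periodic access audit might look like the sketch below. It assumes a simple mapping of roles to resources. The role names, resource names and helper functions are hypothetical. In practice this data would come from your identity or access management platform.

```python
# Hypothetical role-to-resource mapping; real systems would load this from an identity platform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "marketing": {"campaign_assets", "web_analytics"},
    "finance": {"financial_records", "payroll"},
}


def can_access(role: str, resource: str) -> bool:
    """Least privilege: allow access only if the role explicitly grants the resource."""
    return resource in ROLE_PERMISSIONS.get(role, set())


def audit_excess_grants(
    user_grants: dict[str, set[str]], user_roles: dict[str, str]
) -> dict[str, set[str]]:
    """Return, per user, any granted resources that their role does not require."""
    findings: dict[str, set[str]] = {}
    for user, grants in user_grants.items():
        allowed = ROLE_PERMISSIONS.get(user_roles.get(user, ""), set())
        excess = grants - allowed
        if excess:
            findings[user] = excess
    return findings
```

Running `audit_excess_grants` on a schedule surfaces permissions that have drifted beyond a user's role, such as a marketing employee who still holds access to financial records.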

3. Unauthorised Use of External Devices

Employees often connect external devices, like USB drives, to company systems for convenience, potentially leading to unauthorised data transfers or malware infections.

DLP solutions that monitor and control USB usage can help. For example, you can configure DLP policies to block file transfers to unapproved USB devices while allowing trusted devices to function for business-critical tasks.
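An allowlist policy for removable media can be sketched as follows. It assumes the endpoint agent can read a vendor ID, product ID and serial number for each connected device. The `UsbDevice` type, the placeholder identifiers and `allow_file_transfer` are hypothetical and not a specific product's interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsbDevice:
    """Identifiers an endpoint agent might read when a device is connected."""
    vendor_id: str
    product_id: str
    serial: str


# Hypothetical allowlist of approved, company-issued devices (placeholder values).
APPROVED_DEVICES = {
    UsbDevice(vendor_id="1234", product_id="abcd", serial="CORP-0001"),
}

# Blocked attempts are recorded so the security team can review them.
blocked_attempts: list[tuple[str, UsbDevice]] = []


def allow_file_transfer(user: str, device: UsbDevice) -> bool:
    """Allow transfers to approved devices; block and record everything else."""
    if device in APPROVED_DEVICES:
        return True
    blocked_attempts.append((user, device))
    return False
```

Matching on the serial number, not just the vendor ID, keeps a trusted company device distinct from an otherwise identical personal drive.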

4. Mismanagement of Intellectual Property (IP)

Employees working remotely or on personal devices might inadvertently save or share proprietary data on unapproved platforms. For example, saving a product design to a personal cloud drive could lead to IP theft or competitive disadvantages.

Data classification and endpoint DLP tools are critical in addressing this risk. By tagging proprietary files as “highly confidential,” you can ensure they remain encrypted and restricted to approved devices and locations.
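A label-driven save rule can be sketched as follows. It assumes three illustrative sensitivity labels and a hypothetical set of approved storage locations. The label names, location names and `save_allowed` are placeholders, not a particular vendor's classification scheme. To keep the sketch simple, only the highest label is restricted.

```python
from enum import Enum


class Label(Enum):
    PUBLIC = 1
    INTERNAL = 2
    HIGHLY_CONFIDENTIAL = 3


# Hypothetical approved storage locations for highly confidential files.
APPROVED_LOCATIONS = {"corporate-sharepoint", "managed-laptop"}


def save_allowed(label: Label, destination: str, encrypted: bool) -> bool:
    """Apply label-driven rules before a file is saved or shared."""
    if label is Label.HIGHLY_CONFIDENTIAL:
        # Highly confidential files must stay encrypted and on approved locations only.
        return encrypted and destination in APPROVED_LOCATIONS
    # Other labels are not restricted in this simplified sketch.
    return True
```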

5. Delayed Detection of Unexpected Activity

Without robust monitoring, unusual employee activity—such as large-scale downloads of sensitive data—can go unnoticed. This delay gives potential malicious insiders ample time to exfiltrate data.

Deploying DLP tools with real-time monitoring capabilities mitigates this risk. For instance, if an employee suddenly accesses large volumes of restricted data, the system can alert the security team and trigger automated protective actions, such as suspending the account or blocking the activity.
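One simple way to decide whether a download volume is "unusual" is to compare it against the user's own history. The sketch below uses a z-score against a per-user baseline, with an assumed threshold of three standard deviations. The function name and threshold are illustrative choices. Commercial tools typically use more sophisticated behavioural models.

```python
from statistics import mean, stdev


def is_anomalous(history_mb: list[float], today_mb: float, threshold: float = 3.0) -> bool:
    """Flag today's download volume if it sits more than `threshold` standard
    deviations above the user's own historical mean (a simple z-score test)."""
    if len(history_mb) < 2:
        return False  # not enough history to form a baseline
    mu = mean(history_mb)
    sigma = stdev(history_mb)
    if sigma == 0:
        return today_mb > mu  # flat baseline: any increase stands out
    return (today_mb - mu) / sigma > threshold


# Example: a user who normally downloads around 110 MB a day.
history = [120, 95, 110, 130, 105]
if is_anomalous(history, 4000):
    print("Alert security team and suspend activity for review")
```

A per-user baseline avoids flagging roles that legitimately move large volumes of data every day.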

Strengthen Your Data Loss Prevention Strategy

Employee errors are a leading cause of data loss, but their impact can be minimised with the right measures. A combination of automated tools, clear policies, and regular training helps keep your organisation protected from these common risks.

How Can Virtuelle Group Help?

Virtuelle Group offers tailored DLP solutions designed to safeguard your sensitive data. With our expertise, you can:

- Detect and respond to threats in real time, minimising the risk of breaches.

- Protect endpoints, cloud environments, and remote setups with holistic measures.

- Mitigate risks from human error through expert-led education programs.

Don’t wait for a breach to compromise your business. Partner with Virtuelle Group to strengthen your defences, protect what matters most, and confidently stay ahead of emerging threats.

Contact us today to learn how Virtuelle Group can help you build a proactive, reliable DLP strategy for your organisation.

How AI is Shaping Cybersecurity: Opportunities and Challenges

Artificial intelligence (AI) is rapidly emerging as a powerful tool in cybersecurity. It can monitor networks, identify threats, and respond faster than ever before. However, its adoption comes with challenges. AI can amplify security measures but also increase vulnerabilities. Understanding AI’s advantages and risks is critical for organisations looking to strengthen their defences.

This article explores the benefits of AI, such as faster incident response, improved vulnerability management, and more accurate breach predictions, while highlighting the importance of balancing AI’s advantages with the risks posed by increasingly sophisticated cyberattacks.

The Pros of AI in Cybersecurity

Real-Time Threat Detection and Automation

AI systems analyse vast amounts of data to identify suspicious patterns and threats in real time. For example, AI-powered solutions can detect malware and zero-day attacks by recognising anomalies before they escalate. Unlike traditional systems that rely only on predefined rules, AI can adapt to new threats, offering a dynamic line of defence.

Predictive Modelling for Future Risks

AI uses predictive modelling to identify vulnerabilities and anticipate potential cyber threats. By detecting patterns in historical data, it enables organisations to act proactively. For instance, AI can help surface early indicators of advanced persistent threats (APTs), allowing companies to patch weaknesses before they are exploited.

Enhanced Efficiency and Reduced False Positives

Traditional systems often overwhelm IT teams with false positives, causing alert fatigue. AI reduces these false alarms by distinguishing between genuine threats and benign anomalies. This improves response times and ensures critical threats are not overlooked.

Improved Data Protection

AI continuously monitors networks, securing sensitive data from breaches. Australian businesses, which increasingly handle customer data, benefit from AI’s ability to detect unusual activity, such as unauthorised access to confidential files. This reduces the risk of costly data breaches and helps maintain compliance with data protection laws.

The Cons of AI in Cybersecurity

AI-Powered Tools in the Hands of Attackers

Attackers are now using AI to their advantage. Cybercriminals employ AI to automate attacks, create realistic phishing emails, and develop advanced malware. Deepfake technology is a growing threat, as it enables criminals to impersonate individuals, bypassing verification processes. The ACSC warns of evolving tactics, including AI-driven ransomware attacks that are harder to detect.

Bias and Inaccuracies in Detection

AI systems rely on training data, which can sometimes be biased or incomplete. This can result in false positives or missed threats. For example, a biased dataset could cause an AI system to misclassify legitimate activity as suspicious, disrupting business operations. Ensuring high-quality, unbiased data is crucial to avoid these pitfalls.

Privacy Concerns and Ethical Dilemmas

AI processes vast amounts of data, raising privacy concerns. Biometric recognition, for instance, can intrude on individual privacy if misused. Governments and organisations must address ethical questions, such as how much surveillance is acceptable and whether AI decisions can be trusted without human oversight.

High Costs and Dependence on AI Systems

Implementing AI in cybersecurity requires significant investment in technology and skilled personnel. For many Australian SMEs, these costs can be prohibitive, especially when AI is implemented and managed internally. Additionally, over-reliance on AI may lead to complacency, as organisations risk neglecting the value of human intelligence in identifying nuanced threats.

Case Study: The Commonwealth Bank of Australia

The Commonwealth Bank of Australia (CBA) stands out as a leading example of how AI can transform cybersecurity. In 2021, CBA introduced AI systems to analyse customer behaviour, identifying suspicious activities and recovering over $100 million from scams. This initiative enhanced fraud detection and customer protection.

In 2023, CBA expanded its AI efforts with tools like NameCheck and CallerCheck. NameCheck alerts customers when account details do not match intended payees, while CallerCheck verifies bank representatives’ identities, preventing impersonation scams.

The impact has been significant:

- 50% Reduction in Scam Losses: Halved scam-related losses through AI-driven tools.

- 30% Fewer Fraud Reports: Customers reported fewer fraud incidents.

- Proactive Monitoring: AI analyses 20 million payments daily, issuing 20,000 alerts.

CBA’s AI-driven approach has strengthened fraud prevention, improved operational efficiency, and boosted customer confidence, setting a benchmark for AI success in cybersecurity.

Wrapping Up: Navigating AI’s Role in Cybersecurity

Artificial intelligence is transforming cybersecurity, offering significant advantages like real-time detection, automation, and improved efficiency. However, its potential risks, including misuse by attackers, biases, and high costs, cannot be ignored.

Organisations should combine AI systems with human oversight, invest in high-quality data, and adopt ethical practices to mitigate risks. As cyber threats continue to evolve, understanding both the benefits and challenges of AI will be crucial for building resilient defences. Businesses that take a proactive, informed stance will be better equipped to protect themselves in an increasingly digital world.

How Can Virtuelle Group Help?

Virtuelle Group can help businesses harness AI in cybersecurity safely by providing end-to-end services that go beyond identifying threats; we also support rapid remediation and ongoing protection. Our offerings include cyber security strategy, governance and compliance, penetration testing, managed security services, and incident response, all tailored to your unique needs.

Contact us today to learn how Virtuelle Group can help you navigate the complex landscape of AI in cybersecurity, capturing AI’s advantages while mitigating the risks it poses.

Mandatory Ransomware Reporting: What Businesses Need to Know

Cybercrime is an escalating threat to Australian businesses, driving the government to implement stricter measures. The Cyber Security Act 2024, the country’s first standalone cyber security legislation, introduces mandatory ransomware reporting to address the increasing risk. By shifting from voluntary to compulsory reporting, this law aims to provide authorities with accurate data to mitigate cyber threats more effectively and disrupt ransomware operations.

Understanding the implications of this change is crucial for businesses. From reporting obligations to privacy safeguards, this article breaks down what you need to know about mandatory ransomware reporting and how it will impact your organisation.

Understanding the Cyber Security Act 2024

The Cyber Security Act 2024 represents Australia’s first standalone legislation dedicated to cyber security. It provides a clear legislative framework for addressing systemic cyber threats and protecting critical infrastructure. The Act received Royal Assent in November 2024, and various provisions, including mandatory ransomware reporting, are set to take effect by May 2025.

This Act is part of the broader 2023-2030 Australian Cyber Security Strategy, which aims to position Australia as a global leader in cyber security. It introduces several key measures, such as:

- Mandating minimum cyber security standards for smart devices.

- Establishing a Cyber Incident Review Board.

- Enhancing protections under the Security of Critical Infrastructure Act 2018.

However, the mandatory ransomware reporting requirement stands out as the most immediate concern for many Australian businesses.

The Impact of Mandatory Ransomware Reporting on Businesses

The mandatory reporting framework addresses a critical gap in Australia’s cyber security landscape—the underreporting of ransomware incidents. Historically, voluntary reporting mechanisms have failed to provide the government with a comprehensive understanding of the threat landscape. This new measure is designed to disrupt the ransomware business model and prevent cybercriminals from profiting at the expense of Australian businesses.

Who Needs to Report?

Mandatory ransomware reporting applies to businesses with an annual turnover exceeding AUD $3 million, as confirmed in the Cyber Security (Ransomware Reporting) Reporting Rules 2024. This threshold ensures that larger businesses, which are more likely to be targeted by ransomware attacks, comply with the reporting obligations.

Key criteria include:

- The business must operate in Australia and meet the turnover threshold.

- The incident must involve a ransomware payment, either made directly or by a third party on behalf of the business.

What Needs to Be Reported?

Businesses are required to report ransomware payments within 72 hours of making the payment or becoming aware of it. The reporting obligation is triggered only when a ransomware payment is made, not upon receipt of a ransom demand. This means that if a business receives a ransom demand but does not make a payment, it is not required to report the incident under this specific obligation. The report must include:

- Contact and business details of the reporting entity.

- Details about the cyber security incident, including its impact.

- Information about the ransom demand and payment, such as the amount and method of transfer.

- Communications with the extorting entity.
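The trigger conditions and the 72-hour window described above can be expressed as a simple check. This is a simplified sketch of the criteria as summarised in this article. The Act and its Rules contain further detail, such as which entities count as reporting businesses. `RansomwareIncident` and `reporting_deadline` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

TURNOVER_THRESHOLD_AUD = 3_000_000  # threshold as stated in this article
REPORTING_WINDOW = timedelta(hours=72)


@dataclass
class RansomwareIncident:
    operates_in_australia: bool
    annual_turnover_aud: float
    payment_made: bool  # by the business, or by a third party on its behalf
    # When the payment was made, or when the business became aware of it.
    payment_or_awareness_time: Optional[datetime]


def reporting_deadline(incident: RansomwareIncident) -> Optional[datetime]:
    """Return when the report is due, or None if this obligation is not triggered."""
    if not incident.operates_in_australia:
        return None
    if incident.annual_turnover_aud <= TURNOVER_THRESHOLD_AUD:
        return None  # the threshold applies to turnover *exceeding* AUD $3 million
    if not incident.payment_made or incident.payment_or_awareness_time is None:
        return None  # a ransom demand alone does not trigger this obligation
    return incident.payment_or_awareness_time + REPORTING_WINDOW


# Example: payment made at 9:00 am on 2 June 2025 gives a deadline of 9:00 am on 5 June 2025.
deadline = reporting_deadline(
    RansomwareIncident(True, 5_000_000, True, datetime(2025, 6, 2, 9, 0))
)
```

Encoding the criteria this way can help an incident response runbook record the due time as soon as a payment is confirmed.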

Privacy Safeguards

The Act includes strict provisions to protect the privacy of reporting businesses. Information provided in ransomware payment reports can only be used for specific purposes, such as:

- Assisting the business in responding to the incident.

- Supporting government intelligence and response strategies.

- Advising on national cyber security policy.

Critically, this information is shielded from use in most legal proceedings, ensuring businesses are not penalised for complying with their reporting obligations.

Implementation Timeline and Compliance

The ransomware reporting obligation will come into effect in May 2025, six months after the Act’s Royal Assent. This grace period allows businesses to prepare for compliance. It’s essential for organisations to review their cyber security frameworks, establish reporting protocols, and educate key personnel about the new requirements.

Non-compliance with the mandatory reporting obligation can result in civil penalties, with fines of up to 60 penalty units. However, the government has committed to an education-first approach, prioritising support and engagement with businesses to facilitate compliance.

The Road Ahead for Businesses in Australia

The Cyber Security Act 2024 marks a significant step forward in Australia’s fight against cybercrime. By introducing mandatory ransomware reporting, the government aims to disrupt the ransomware business model and build a stronger, more secure cyber environment. While the new obligations may pose initial challenges, they represent a critical investment in the long-term resilience and security of Australian businesses.

As the mandatory reporting deadline approaches in May 2025, businesses must act now to ensure they are ready to comply. By doing so, they contribute to a safer digital landscape for all.

How Can Virtuelle Group Help?

Virtuelle Group is well-positioned to assist businesses in navigating these changes and ensuring compliance with the new rules.

- Security Framework Review – Assess and strengthen your current cyber security measures to align with best practices and regulatory requirements.

- Reporting Protocols – Develop and implement clear incident response and reporting procedures to meet the 72-hour ransomware payment reporting rule.

- Compliance Support – Provide ongoing guidance and managed services to ensure your business meets all new legal obligations and avoids penalties.

Contact us today to learn how Virtuelle Group can help you confidently address the new mandatory ransomware reporting requirements, strengthen your security frameworks, and ensure ongoing compliance with the Cyber Security Act 2024.

AI Compliance: Navigating Future Risks for Businesses and Governments

As AI transforms industries, businesses and governments must navigate emerging risks like data privacy, bias, and security—discover how AI governance can balance innovation with responsibility and compliance.

Artificial intelligence (AI) is changing how businesses and governments operate by enabling faster decisions, improving productivity, and enhancing service delivery. As AI adoption grows, so do concerns about its potential risks. Issues like data privacy, governance, and security have become critical challenges that need careful management.

This article looks at strategies for managing AI risks while ensuring systems stay secure and compliant. It also highlights how organisations can balance technological progress with ethical responsibility.

Understanding AI Compliance: Why It Matters

AI compliance means following laws, ethical standards, and industry guidelines when creating and using AI systems. It ensures that AI tools are safe, fair, and transparent. While AI can automate tasks and improve decision-making, it also brings risks like data breaches, biased results, and unclear accountability.

Industries such as finance, healthcare, and public services face higher compliance demands because of the sensitive data they manage. By understanding these risks, organisations can develop better policies and reduce potential legal or ethical problems.

Emerging Risks in AI-Driven Economies

AI technologies bring unique risks that require active management. Addressing these issues is key to supporting long-term sustainability and fairness.

1. Data Privacy & Security Risks

AI systems process large amounts of personal, financial, and commercial-in-confidence data, making them attractive targets for cyberattacks. Unsecured AI tools can cause data breaches that expose sensitive information. Businesses must secure data and limit collection to avoid breaching privacy rules.

2. Bias & Discrimination

AI can reinforce biases when it is trained on unfair or incomplete data. For instance, recruitment algorithms may favour certain demographics if the training data lacks diversity. To reduce discrimination, developers should use diverse datasets and regularly check for bias.

3. Transparency & Accountability

Many AI systems work like “black boxes,” making their decision-making process difficult to understand. This creates accountability problems, especially when AI-driven mistakes happen. Businesses should be able to explain how their AI works and facilitate external reviews when necessary.

4. Environmental Risks

Running AI systems at scale consumes significant energy. The data centres that power AI tools require large amounts of electricity, which raises environmental concerns. Companies should consider energy-efficient technology and eco-friendly AI practices.

Regulatory Frameworks and Governance Models Taking Shape

Global Regulatory Trends

Governments around the world are setting rules to manage AI-related risks. The EU’s AI Act sorts AI tools by risk level, with tougher rules for critical areas like healthcare and policing. In the U.S., executive orders push AI innovation while addressing privacy and national security concerns.

Australia’s Approach

Australia follows a two-step strategy for AI governance by using voluntary guidelines and considering mandatory rules for high-risk uses. In August 2024, the government introduced the Voluntary AI Safety Standard, which provides guidance on creating safe and ethical AI systems.

In September 2024, Australia proposed mandatory rules for high-risk AI systems affecting public safety, human rights, and legal decisions. This ensures stricter regulation where needed while encouraging responsible AI development.

Voluntary vs. Mandatory Compliance

There is ongoing debate about whether AI compliance should be voluntary or legally required. Voluntary rules offer flexibility but may lack enforcement. Mandatory laws ensure responsibility but can limit innovation if applied too strictly. A balanced approach combining both methods could be the best solution.

Best Practices for Maintaining AI Governance

Effective AI governance ensures that organisations deploy and manage AI systems responsibly while driving business success. Following best practices can help organisations manage AI compliance effectively while supporting business growth.

Cross-Functional Collaboration

AI governance isn’t just an IT issue—it needs input from legal, risk management, ethics, and operational teams. Working together ensures comprehensive oversight, balanced decision-making, and alignment with organisational values.

Staying Updated on Regulations

As AI governance frameworks evolve, businesses must stay informed about industry best practices and emerging guidelines. This includes:

- Monitoring updates from regulatory bodies and industry groups.

- Reviewing and revising internal governance policies regularly.

- Conducting periodic AI audits to ensure adherence to governance principles.

Developing Incident Response Plans

Proactive risk management can prevent governance failures. This includes:

- Identifying potential risks related to AI deployment.

- Establishing protocols for issue detection and resolution.

- Regularly reviewing incidents to strengthen governance processes.

The Future of Responsible AI

As AI adoption continues to reshape businesses and governments, ensuring compliance has never been more important. Proactively managing AI risks through clear governance, transparent practices and regulatory adherence can safeguard against legal, financial and ethical challenges.

How Can Virtuelle Group Help?

Businesses and governments must act now by adopting comprehensive AI compliance strategies that balance innovation with accountability. By fostering responsible AI development, organisations can build trust, drive growth and remain resilient in an increasingly AI-powered world.

Virtuelle Group is well-positioned to offer a suite of services that help your organisation manage AI risks, ensure regulatory compliance, and balance innovation with ethical responsibility.

- IT & AI Risk Reviews – strategic analysis of AI/IT environments, compliance gap identification, roadmaps

- AI Governance Frameworks – custom governance strategies, policy development, stakeholder engagement

- Data Security & Privacy – security assessments, cloud compliance, data protection aligned with local regulations

- Compliance Monitoring – regular audits, regulatory tracking, incident response planning

- Training & Change Management – staff workshops, policy rollout, multi-team collaboration

Contact us today to learn how Virtuelle Group can help you navigate the complex landscape of AI governance and compliance, ensuring that innovation is balanced with responsibility and regulatory adherence.

Privacy Act Amendments: Impact on Cybersecurity and AI

Learn how the Privacy and Other Legislation Amendment Act 2024 introduced stricter data protection laws, increased penalties, and new AI compliance requirements.

The Australian Privacy Act has undergone significant amendments. The first changes took effect in December 2024, and others are phased in through 2025 and 2026. These changes, combined with the introduction of the Cyber Security Act 2024, impose stricter compliance obligations on businesses handling personal data.

Increased regulatory enforcement, heightened cybersecurity obligations, and new AI-specific compliance requirements create new complexities that all businesses must address to avoid financial penalties, legal liability, and reputational damage.

Understanding the Key Changes in the Privacy Act Amendments

Protection of Personal Information

The Amendment Act clarifies that ‘reasonable steps’ to protect information include implementing ‘technical and organisational measures’. This is effective from 11 December 2024.

Regulatory Powers and Penalties

The OAIC has new powers to issue infringement and compliance notices. Non-compliance with a compliance notice may result in civil penalties. This is effective from 11 December 2024.

Statutory Tort for Serious Invasions of Privacy

Individuals, including employees, can take legal action against organisations or individuals for serious invasions of privacy. This will be effective on or before 10 June 2025.

Automated Decision-Making (AI)

Transparency obligations require organisations to update their privacy policies to disclose when decisions are made using automated processes. This is effective from 10 December 2026.

Other Changes:

- A Children’s Online Privacy Code is to be developed and registered by 10 December 2026.

- A power to prescribe ("whitelist") countries with substantially similar privacy protections, simplifying the transfer of personal data to those countries.

Tranche 2

Many ‘agreed in principle’ proposals were not included in the first set of amendments and are expected to be addressed in a second tranche of legislation. These include removing the small business exemption for businesses with turnover under $3 million, reforming the employee records exemption, and changes to data retention and marketing.

How the Privacy Act Amendments Affect Cybersecurity

The amendments now clarify that ‘reasonable steps’ to protect personal data include ‘technical’ and ‘organisational’ measures. Technical measures cover physical, hardware and software protections. Organisational measures include policies, procedures, training and response plans.

Cybersecurity is now a legal obligation rather than best practice. Under the new laws, organisations that experience a data breach may face severe financial and legal consequences if their technical and/or organisational defences are deemed not to be ‘reasonable’.

To strengthen cybersecurity compliance for personal data, organisations should consider:

- The extent of personal data held and its level of sensitivity, to gauge the consequences of a breach.

- How effective existing security policies and procedures are at protecting personal data.

- Physical and cybersecurity measures to protect the organisation from external attack and potential litigation for breach of privacy.

- Response measures to limit access to personal data and recover from a potential breach.

AI-Specific Compliance Requirements

The Privacy Act amendments require businesses to be more transparent and accountable in how they process personal data using AI systems.

Although the new automated decision-making amendments are not due to come into effect until December 2026, organisations should begin to factor in the requirements for existing and new AI models.

A system can be considered to use automated decision-making if:

- It makes a decision, or does something substantially and directly related to making a decision, about an individual

- The decision could reasonably be expected to significantly affect the individual’s rights or interests, and

- Personal information is used to make the decision.
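Because the three conditions above must all be met, a first-pass internal inventory of systems can treat them as a simple AND. The sketch below is a simplified screening aid, not a legal test: the `SystemProfile` record, its field names and the example systems are hypothetical, and each answer would still need human (and possibly legal) judgement.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    """Hypothetical answers recorded for one system during an internal review.

    Field names are illustrative only; they paraphrase the three conditions
    and are not taken from the legislation.
    """
    name: str
    makes_or_substantially_contributes_to_decision: bool
    could_significantly_affect_rights_or_interests: bool
    uses_personal_information: bool


def is_automated_decision_making(profile: SystemProfile) -> bool:
    # The conditions are cumulative: all three must hold for the system
    # to be flagged for the new privacy-policy disclosures.
    return (
        profile.makes_or_substantially_contributes_to_decision
        and profile.could_significantly_affect_rights_or_interests
        and profile.uses_personal_information
    )


# Hypothetical loan pre-screening model that scores applicants using
# their personal details: meets all three conditions.
loan_screen = SystemProfile("loan-prescreen", True, True, True)
# Hypothetical stock-forecasting model that uses no personal information.
stock_forecast = SystemProfile("stock-forecast", True, False, False)

print(is_automated_decision_making(loan_screen))     # True
print(is_automated_decision_making(stock_forecast))  # False
```

Recording the answers per system, rather than only the final flag, makes it easier to revisit a classification when a model or its inputs change.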

Organisations that use such systems will need to disclose in their privacy policies:

- The kinds of personal information used by the programs

- The kinds of decisions made solely by the programs

- The kinds of decisions for which the programs do something substantially and directly related to making the decision.

Organisations using AI for decision-making about individuals should consider:

- Establishing AI governance policies defining data handling and decision-making.

- Keeping detailed records of AI-driven decisions for accountability.

- Conducting regular AI audits to prevent bias and unintended consequences.
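The record-keeping suggestion above could start as simply as an append-only log with one entry per decision. The sketch below assumes a hypothetical `DecisionRecord` structure and `append_record` helper stored as JSON Lines; which fields an organisation actually needs is a governance decision, not something this example settles.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit record for one AI-driven decision about an individual.

    The fields are illustrative assumptions, not a format prescribed by the
    Privacy Act or the OAIC.
    """
    decision_id: str
    system_name: str
    model_version: str
    decision_kind: str               # e.g. "loan pre-screening"
    personal_info_kinds: list[str]   # kinds of personal information used
    outcome: str
    made_solely_by_program: bool     # False if a person made the final call
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(path: str, record: DecisionRecord) -> None:
    # Write one JSON object per line (JSON Lines), opening the file in append
    # mode so existing entries are left untouched.
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(asdict(record)) + "\n")
```

Each call adds one line, so the log can later be filtered by `system_name` or `model_version` during an audit. If entries can be linked back to an individual, the log may itself hold personal information and would need the same access controls and retention rules as the source data.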

Failure to comply with these automated decision-making obligations could result in privacy lawsuits, regulatory fines, and reputational damage.

A New Privacy Landscape

Taken together, the Privacy Act amendments, the Cyber Security Act 2024 and further legislation expected in the near future show that protecting personal data is no longer business as usual. It requires re-examining current practices now, continually re-aligning with what counts as reasonable technical and organisational measures, and being highly transparent as AI models are increasingly used.

Disclaimer: Virtuelle Group are experts in Cybersecurity and AI, but we are not legal specialists. While extensive research has been undertaken to ensure the accuracy of the above, it is intended as a high-level summary. You should not rely on it as legal advice, and should conduct your own due diligence.